Province updated public procurement process days after Deloitte AI scandal exposed

Proponents and suppliers are now required to disclose any intended use of AI before the government will award a contract

Days after The Independent broke a story about the apparent use of artificial intelligence in a government-commissioned healthcare report, the province added safeguards to its public procurement process in an effort to mitigate risks associated with the use of AI.

On Dec. 2, the Public Procurement Agency “updated its contracting templates to include AI‑risk mitigation provisions,” Transportation and Safety Media Relations Manager Janelle Simms said in a statement on behalf of the agency. “Working with Justice and Public Safety and the OCIO, additional safeguards including revised solicitation templates and submission forms were implemented on January 15, 2026.” The new safeguards were first reported by the Canadian Press.

The contracting template changes were implemented just 10 days after The Independent reported the existence of fake citations in Deloitte Canada’s $1.6-million health human resources report for the provincial government, which Deloitte later admitted were the result of the use of artificial intelligence.

Two days after the Nov. 22 story was published, Premier Tony Wakeham’s office told The Independent the premier had asked Government Services Minister Mike Goosney to “undertake a review of what guidelines should be put in place to stop this from happening in the future.” The Independent has requested an update on Goosney’s review but still has not received a response from his department.

“The Provincial Government recognizes that understanding and mitigating risks associated with artificial intelligence requires ongoing collaboration across departments,” Simms said on behalf of the Public Procurement Agency.

The Deloitte AI scandal was the second to rock the government in as many months. In September CBC/Radio-Canada reported that the province’s new 10-year Education Accord contained AI-generated errors. The report’s authors, Anne Burke and Karen Goodnough, later said the errors likely occurred within government after the researchers submitted their draft.

On Nov. 29, the government appeared to corroborate the allegation. A spokesperson from the Department of Education and Early Childhood Development told The Independent that “[t]he inaccurate citations and references generated by AI was unacceptable,” and that the government “will ensure that any application of AI within government is subject to strict review, human verification, and transparent quality controls.”

Last week Simms said a “review of AI practices is currently being led” by the Office of the Chief Information Officer, and that the OCIO’s findings “will shape the future approach to using this technology across government.” Simms also said the OCIO is “ensuring that any use of artificial intelligence within government continues to include strong human oversight and transparent quality controls.”

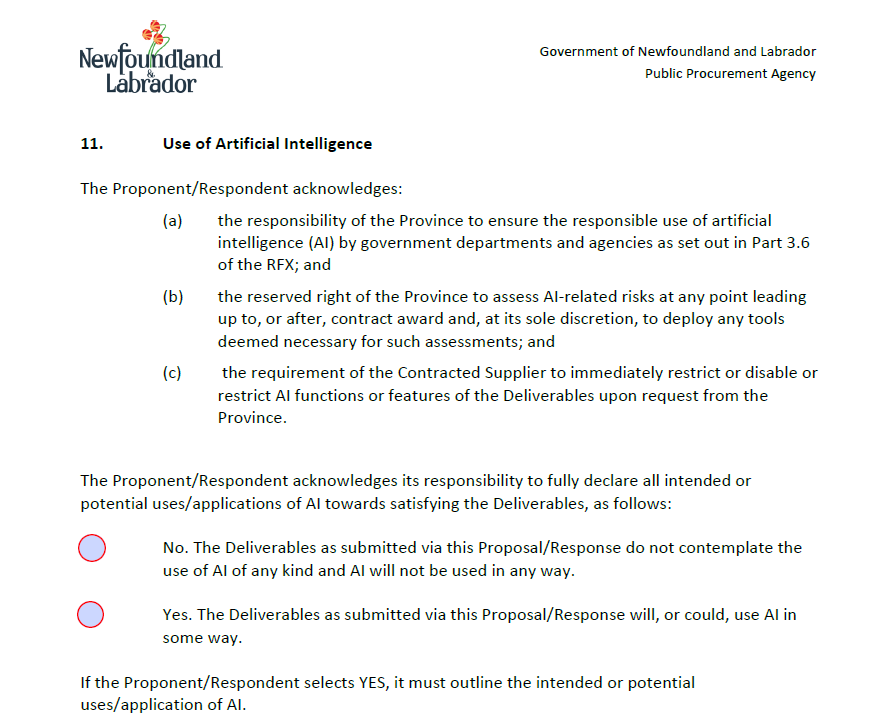

In an updated template of the government’s Request for Proposals contract, a section on the “Use of Artificial Intelligence” stipulates that procurement “deliverables” are subject to the government’s own Responsible Use of Artificial Intelligence Technology policy, and that it is “critical for the Province to understand any AI-related privacy, security, and operational implications before making an award.” The contract also states proponents “must declare” in a separate submission form “all intended uses of AI and/or machine learning with respect to satisfying the Deliverables.”

The relevant part of that form, shared with The Independent by the Public Procurement Agency, asks contracted suppliers to acknowledge that the government has the “reserved right […] to assess AI-related risks at any point leading up to, or after, contract award and, at its sole discretion, to deploy any tools deemed necessary for such assessments.”

It also requires suppliers to “immediately restrict or disable or restrict AI functions or features of the Deliverable upon request from the Province.” The form then requires proponents and suppliers to “fully declare all intended or potential uses/applications of AI towards satisfying the Deliverables.” If the proponent selects “Yes” on the form, they must “outline the intended or potential uses/application of AI.”

Minister of Public Procurement Barry Petten said last week that under the new policies the government “may investigate, approve, deny or modify a vendor’s and intended use of AI, and we may also audit the vendor’s use of AI.

“Those are big changes,” he said.

Deloitte is currently under investigation by Chartered Professional Accountants N.L. for its use of AI in the healthcare report. The firm has not responded to The Independent’s requests for comment.